Laravel: Avoiding the “who caches this first”

Let me tell you a story of multiple processes caching the same thing, over and over again

Some months ago, I created a project for a client that scaled from one to around 5~10 processes at the same time. I’ll save you the details and just get straight to the point: these had to make a complex query based on the result of an external API, which were both slow, and stalled all processes.

As you can guess, I cached the result. The problem? The processes still stalled for several seconds. Did the cache not work?

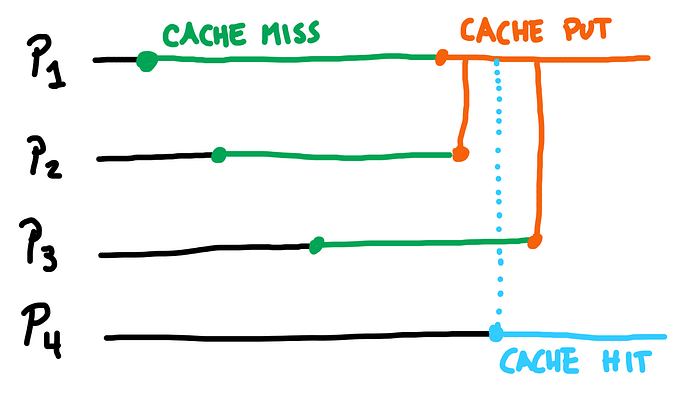

The problem was not the cache itself, but rather the procedure. Since these processes could start just milliseconds away from each other, all of them will miss the cache until it was populated. In other words, all the processes would execute the same HTTP Request and the same query and save it to the cache.

Luckily for us, Laravel has “atomic” locks for cache handling, that would help around this problem.

Locking for me, unlocked for everyone else

The cache in Laravel, as long your driver supports it, contains a “locking” system. What it does is very simple: when it sets a value as locked, it returns true.

Why it returns a boolean? Because if you call it again, you will receive false as its already locked.

You can also release the lock. This will allow the value to be locked again by anybody else, like another process.

The above logic can be replicated with anything, but there is a neat trick for using this lock: you can “wait” for someone to release it, or “fail” if you think you have waited long enough, instead of just raw polling the cache like there was no tomorrow.

To do this, we can use the block() method, which accepts a callback to execute once the lock has been acquired.

We can use this to our advantage to avoid multiple processes to cache the same data, by only letting the first to do it while the other processes wait until it is stored, instead of bursting the server with load for a result that will be the same.

One caches, the rest waits

The logic for this is really simple:

- We will acquire the lock for a given value, and, once is acquired,

- we will check if the cache has the data to return,

- otherwise we will retrieve the data and store in the cache.

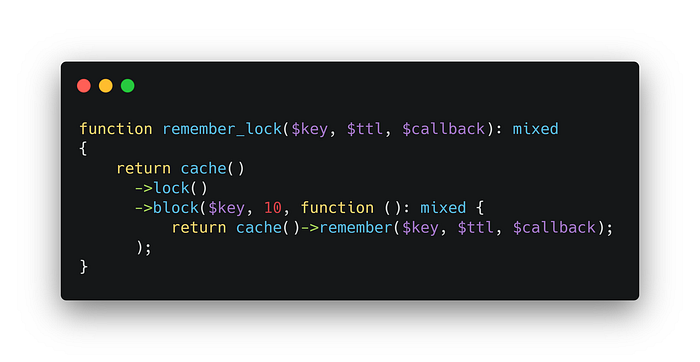

The first step can be resumed using block(), which accepts a callback to execute if the lock is acquired before it timeouts.

The second and and third steps are basically the remember() method, which checks if the cache data exists, or executes a callback to retrieve and store the data, returning it to the developer.

Wrapping everything up, we end up with something like this:

This function kills two birds with one stone:

- The first process that acquires the lock will execute the callback, which will retrieve and store the data.

- The next process will wait to acquire the lock, and once done, the

remember()will return the cached data.

This seems like an edge case to be included in the Framework, but for caching data that is computationally expensive (a complex SQL query) or taxing (a slow HTTP Request), you may want to avoid multiple processes doing the same.

I decided to make this logic part of my RememberableQuery package for Laravel. If you’re looking to remember queries in the cache with just one method, give it a chance.